The Language Monolith: How AI Reveals the Hidden Structure of Words

The Language Games We Play And How AI Learned the Rules

The artefact

In the movie “2001: A Space Odyssey” the monolith is a mysterious artefact which appears to have been left behind by some advanced alien civilization. The purpose of the monolith is never made clear but it seems to be a technological cheat sheet, a set of SparkNotes from the future, that enables us to play catch-up in a technological race in which, up until then, we believed we were the runaway leaders.

With its launch in November 2022, ChatGPT seemed somewhat similar to this fictional monolith. Suddenly, here was an enigmatic artefact that appeared to be more advanced than anything we had seen before. Like the monolith, it could be seen as a gift from the future, forcing us to confront questions about intelligence, consciousness and the limits of what machines are capable of.

To answer these questions we have poked and prodded at ChatGPT, and specifically at the Transformer architecture which underlies generative models such as OpenAI’s ChatGPT and Anthropic’s Claude, trying to understand its inner workings and unlock its secrets like archaeologists decoding an ancient text.

We are doing this because we do not understand why models like ChatGPT are so good at generating language and solving tasks that previously seemed beyond the capabilities of computer-based models. If we understand how Transformers work, the thinking goes, we should be able to explain whether these models have human-like intelligence, whether they can reason and maybe even whether they have something akin to consciousness.

But what if the answer is not hidden in the inner workings of neural networks like Transformers? What if these models and algorithms are merely tools which enable us to decode the true artefact, human language?

To be explicit, the premise is that models like ChatGPT merely exploit the signal encoded in language by the way we use it when interacting with the world around us. The secret is not hidden in the code or the silicon, but in human language itself because it acts as a mechanism for encoding intelligence and reasoning. We should thus ensure we look at language not as a mere medium of communication but as a technology, in and of itself, which we need to decode.

This is not to say we should ignore ChatGPT. We still need to understand its inner workings as best we can. But we need to balance that with trying to understand why language works the way it does. We need to view language like an alien artefact that has remained hidden from us and was suddenly unearthed in a recent archaeological dig.

This artefact was not hidden from everyone, though. Two individuals in particular, the mathematician and engineer Claude Shannon and the philosopher Ludwig Wittgenstein, showed us that we should pay more attention to language. Had we listened, we would not be surprised that it enables Large Language Models (LLMs) to perform so impressively at previously exclusively human skills such as language and reasoning.

The Human Language Model

Claude Shannon discovered something special about language and created ChatGPT’s predecessor many decades before we had ever heard of OpenAI or Anthropic. Shannon did not have the computing resources we have today, so when he created the world's first Language Model (LM) he had to use the only resources he had at hand, namely his library and his intuition. Hence the missing L from LLM: it was decidedly not “large”.

As we will discuss, Shannon’s main discovery was that language exhibits patterns, and that we can use these patterns, this “hidden structure”, to quantify the information content of a message. His primary focus was on the “hows” of language: how it is constructed, how it can be predicted, and how it can be used more efficiently. His goal was to improve the transmission of information, making it faster, more efficient, and much more reliable. Shannon was less concerned with the “why” behind these patterns, as his interests lay in the practical applications of encoding and transmitting information rather than exploring the deeper origins or purposes of language.

But the “why” is important: why does language follow these patterns in the first place? The philosopher Ludwig Wittgenstein will help us understand how these patterns could emerge because language is a social activity.

It's just a game

To understand the “why” we will show that Wittgenstein’s insight was that the rules and structure Shannon described stem from the way we use language; specifically, from what Wittgenstein called “language games”. These are the games we play in order to convey our inner meaning to those around us. Like any game, each language game has a unique set of rules, and these rules are tied to the context and purpose of that specific situation. There are no universal “rules” as such. Using Wittgenstein’s description, we will attempt to show how we could think of these games as billions, or trillions, of training simulations occurring all around us, testing and refining language into something we can use to communicate effectively with each other. In effect, these games embed the signals that models like ChatGPT would later discover.

Wittgenstein believed that context, such as our environment, or the situation we are in, is central to understanding the “why” of language: why do we see some patterns in language? Why do some words seem more likely than others in a given context?

By examining the insight of these two thinkers, we can begin to see that the monolith did not arrive with ChatGPT. And, spoiler, it wasn’t left by some advanced alien civilization or hidden in some technological breakthrough. Instead, it has been with us all along, hidden in the structure of human language itself.

The Language of Information

When we think about language models we think about Machine Learning (ML) models like ChatGPT or Claude. The first LM, however, was not some abstract computer algorithm but an engineer and mathematician who loved unicycles, juggling and building things like the world's first wearable computer, one of the first chess-playing machines and, rather unusually, a flame-throwing trumpet. This man was Claude Shannon.

Shannon worked at the world-renowned Bell Labs in America. Bell Labs is famous for inventing everything from the transistor to the UNIX operating system. At the time, Shannon’s work at Bell Labs was mainly concerned with the phone network and how to transmit messages. Specifically they were concerned with how many messages they could transmit and how well they could transmit those suitcases. Things like noise and interference meant that even if you could transmit the message it might not be understood at the other end. Shannon believed that there was a way to represent the information being transmitted along a phone line mathematically. If that worked, then Shannon could, in theory, represent any type of information mathematically, regardless of its content. But first he needed to understand language.

Satchels and Suitcases

When you interact with models like ChatGPT you give them some text. For ChatGPT this can be in the form of a prompt, a question or an instruction. The model itself does not “read” the text the way you are reading this text. Instead, the text needs to be encoded, or represented, before anything else can happen. This first step is usually undertaken by a component known as a tokenizer. We won’t get into that in detail in this post. All you need to know for now is that the tokenizer splits the input text into tokens, which the model then maps to vectors that represent the text.

Similarly, Shannon needed some way to represent or encode the information contained in the messages he was trying to transmit. He was not sending them to an LM, but he was sending them from one machine to another, in this case from phone to phone.

You might think of measuring information in terms of pages and words. For example, a blog post can contain 3,000 words, or a book can be 500 pages long. We use these as heuristics to get an idea for how much information they may contain.

The word or page count, however, does not really tell us about the amount of information these sources actually contain. Stanley Kubrick's secretary typed up a manuscript of hundreds of pages for the film “The Shining”. Each page contained different variations of the same satchel, “all work and no play makes Jack a dull boy”. There were a lot of words and pages in that manuscript, but did it really contain a lot of “information”?

Shannon thought about this and came to the conclusion that the amount of information contained in any message is related to the amount of uncertainty that message eliminates. How much knowledge does a 500-page manuscript containing the same sentence repeated over and over convey? What new knowledge do we learn about the world? The 3,000-word blog post could be an insightful review of the potential impact of the latest AI technology on society. It might tell us about things we did not previously know, thus reducing our uncertainty.
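One way to make this idea concrete is the standard information-theoretic measure of surprise: an outcome with probability p carries log2(1/p) bits of information, so a message we were already certain of carries none. The sketch below also uses off-the-shelf compression as a rough proxy for redundancy. That proxy, the stand-in manuscript and the function names are assumptions made for illustration, not something Shannon used.

```python
import math
import zlib

def surprisal_bits(probability: float) -> float:
    """Bits of information gained by observing an outcome with this probability."""
    return math.log2(1 / probability)

def compressed_fraction(text: str) -> float:
    """Compressed size divided by original size (smaller means more redundant)."""
    raw = text.encode("utf-8")
    return len(zlib.compress(raw)) / len(raw)

# Assumption: a stand-in for the manuscript, one sentence repeated 1,000 times.
manuscript = "All work and no play makes Jack a dull boy. " * 1000

print(surprisal_bits(0.5))  # a fair coin flip: 1.0
print(surprisal_bits(1.0))  # an outcome we were already sure of: 0.0
print(compressed_fraction(manuscript))  # a very small fraction of the original
```

The repeated manuscript shrinks to a sliver of its original size because, after the first sentence, each further copy eliminates almost no uncertainty.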

Three cheers for redundancy

What does this have to do with ChatGPT? Well, Shannon believed that human language was actually a poor method of eliminating uncertainty. In Shannon’s words, language contains a large amount of redundancy. For example, remember those strange references to suitcases and satchels earlier? You probably noticed them and assumed they were errors. Well, here they are again for reference:

- Specifically they were concerned with how many messages they could transmit and how well they could transmit those suitcases

- Each page contained different variations of the same satchel, “all work and no play makes Jack a dull boy”.

It should be obvious that the correct words were:

- Suitcases -> messages

- Satchel -> sentence

Why is it so obvious what the next word should be? Shannon argued that this predictability arises from the redundancy of the English language. Often, we don’t even need to finish a sentence to understand what someone is trying to say. This redundancy exists not only at the word level but also at the character level, as demonstrated by our ability to understand the following sentence:

- Mst ppl hv lttl dffclty n rdng ths sntnc

Shannon believed that over 80% of the English language we use is redundant. In principle, that means a message could be encoded with only around 20% of its original characters and still be recovered in full.
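The vowel-free sentence above is easy to reproduce. The following is a minimal sketch, assuming that dropping every vowel is the only transformation applied; the function name is illustrative.

```python
VOWELS = set("aeiouAEIOU")

def strip_vowels(text: str) -> str:
    """Remove every vowel, leaving consonants, spaces and punctuation."""
    return "".join(ch for ch in text if ch not in VOWELS)

sentence = "Most people have little difficulty in reading this sentence"
shortened = strip_vowels(sentence)

print(shortened)
print(f"{len(shortened) / len(sentence):.0%} of the characters kept")
```

Dropping vowels is a crude trick that removes far less than Shannon's estimate of the total redundancy. Most of the remaining redundancy sits in longer-range patterns between letters and words, which a reader fills in from context.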

Another way to think about it is that language isn’t completely random, nor is it totally predictable. There are options; you are just not 100% free to choose anything. In other words, language is stochastic. And, unbeknownst to all of us, including, perhaps, Shannon himself, he had discovered the first piece of the puzzle that would eventually lead to ChatGPT. As Jimmy Soni and Rob Goodman note in their captivating book about Shannon, A Mind at Play, “from the perspective of the information theorist, our languages are hugely predictable – almost boring”. To a machine learning model, a hugely predictable dataset is love at first sight. Unfortunately, Shannon lacked the computing power to test his theory at the time, but that didn’t stop him.

The world's first language model?

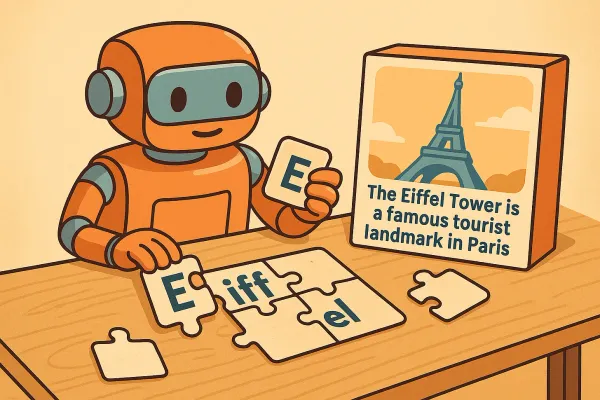

To prove that language was stochastic, Shannon set out to create sensible-looking sentences using a simple algorithm that relied on our limited freedom to choose the next character or word. Essentially, he aimed to show that if language was stochastic, he could find patterns to predict the next word.

Shannon began with a simple approach. He used a random number book (yes, that is how you generated random numbers back then!) and picked numbers between 1 and 27, which he used to choose from a 27-symbol alphabet (26 letters plus the space). This was a purely random approach and, naturally enough, resulted in something approximating noise.
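This first, purely random step can be sketched in a few lines. Python's `random` module stands in for the random number book, and the function name and seed are illustrative assumptions.

```python
import random
import string

ALPHABET = string.ascii_lowercase + " "  # 26 letters plus the space: 27 symbols

def random_text(length: int, seed: int = 0) -> str:
    """Pick every symbol uniformly at random, ignoring all structure in English."""
    rng = random.Random(seed)
    return "".join(rng.choice(ALPHABET) for _ in range(length))

print(random_text(60))
```

Every symbol is equally likely here, so a "q" or a run of three spaces appears as often as an "e", which is why the output reads as noise.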

He then started with the easiest pattern he could think of: the frequency of letters. E occurs more frequently than Q and so on. So now, armed with his random number book he was able to choose letters based on a letter frequency distribution. This still wasn’t great since we know that some letters are more or less likely to appear after certain letters. It’s not just down to their individual frequency distributions.
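A sketch of this second step, assuming the letter frequencies are estimated from a short sample rather than from published tables. The sample text and function names are illustrative.

```python
import random
from collections import Counter

# Assumption: a short sample used to estimate letter frequencies.
SAMPLE = (
    "the purpose of the monolith is never made clear but it seems to be "
    "a technological cheat sheet from the future"
)

def letter_frequencies(text: str) -> Counter:
    """Count how often each letter (and the space) appears in the text."""
    return Counter(ch for ch in text.lower() if ch.isalpha() or ch == " ")

def frequency_text(text: str, length: int, seed: int = 0) -> str:
    """Sample symbols independently, weighted by how often each one occurs."""
    counts = letter_frequencies(text)
    symbols = list(counts)
    weights = [counts[symbol] for symbol in symbols]
    rng = random.Random(seed)
    return "".join(rng.choices(symbols, weights=weights, k=length))

print(frequency_text(SAMPLE, 60))
```

Common letters now dominate the output, but each symbol is still chosen without looking at its neighbours, so the result contains few recognisable letter combinations.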

Instead of working out the frequency distributions for “bigrams”, or two-letter combinations (for example, how often the combination “th” or “le” occurs), Shannon, lacking the computing resources we take for granted today, used a cruder but innovative alternative.

He picked a random letter from a book, opened another page to find that letter, and noted the letter that followed it. He then repeated the process with pairs of letters: taking the last two letters he had written down, he found another occurrence of that pair on a random page and recorded the letter that came next. As he refined this, the resulting passages increasingly resembled regular English.
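The book-lookup procedure translates fairly directly into code. This is a sketch under stated assumptions rather than a reconstruction of Shannon's exact method: the short `BOOK` string stands in for his library, the book is treated as circular so that a search never runs off the end, and the function name is hypothetical.

```python
import random

# Assumption: a short stand-in for Shannon's book; a longer text works better.
BOOK = (
    "the purpose of the monolith is never made clear but it seems to be "
    "a technological cheat sheet that enables us to play catch-up in a "
    "technological race in which we believed we were the runaway leaders "
)

def shannon_lookup(book: str, context_len: int, length: int, seed: int = 0) -> str:
    """Repeatedly find the current context at a random place in the book
    and copy the character that follows it."""
    if not 1 <= context_len < len(book):
        raise ValueError("context_len must be at least 1 and shorter than the book")
    rng = random.Random(seed)
    looped = book * 3  # treat the book as circular so a search always succeeds
    start = rng.randrange(len(book) - context_len)
    output = book[start:start + context_len]
    while len(output) < length:
        context = output[-context_len:]
        page = rng.randrange(len(book))        # open the book at a random place
        found = looped.find(context, page)     # scan forward to the next occurrence
        output += looped[found + context_len]  # note the character that follows
    return output

print(shannon_lookup(BOOK, context_len=1, length=80))
print(shannon_lookup(BOOK, context_len=2, length=80))
```

Raising `context_len` from 1 to 2 mirrors the move from single letters to pairs of letters. With a book this small the longer context mostly reproduces the source text, which is one reason a large library (or corpus) matters.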

Then Shannon extended this method to words. Starting with a random word, he found occurrences of it on random pages and noted the next word. Using this method Shannon generated the following passage:

The head and in frontal attack on an english writer that the character of this point is therefore another method for the letters that the time of who ever told the problem for an unexpected.

This basic process enacted by Shannon could be considered an early ancestor of ChatGPT: a simple algorithm producing text that resembles English. While the sentence makes no more sense than “colorless green ideas sleep furiously”, that wasn’t the point. Lacking the computing resources we have today, Shannon could not learn the complex probability distributions about word usage.
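With a computer, the page-flipping can be replaced by a table that counts which words follow which. The following is a minimal sketch of such a word-level model. The tiny corpus is invented for illustration and the function names are assumptions; this is a simple bigram model, not how ChatGPT itself is built.

```python
import random
from collections import Counter, defaultdict

# Assumption: a tiny invented corpus; Shannon used whole books.
CORPUS = (
    "language is an activity and language is a game and the game has rules "
    "and the rules depend on the context of the game"
)

def build_bigram_table(text: str) -> defaultdict:
    """For every word, count the words that immediately follow it."""
    words = text.lower().split()
    table = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        table[current][following] += 1
    return table

def generate(table, first_word: str, max_words: int, seed: int = 0) -> str:
    """Walk the table, sampling each next word in proportion to its count."""
    rng = random.Random(seed)
    words = [first_word]
    while len(words) < max_words:
        followers = table.get(words[-1])
        if not followers:  # the word never appeared with a successor
            break
        choices, weights = zip(*followers.items())
        words.append(rng.choices(choices, weights=weights)[0])
    return " ".join(words)

table = build_bigram_table(CORPUS)
print(generate(table, "language", max_words=12))
```

Each word depends only on the one before it, which is why text produced this way can look locally grammatical while drifting without any overall meaning.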

The point is, Shannon proved that there was something there, even if only syntactically: a fundamental limit on our freedom to use language. We might think there are no constraints on how we express our innermost thoughts, but Shannon showed that even these are bound by certain rules and patterns. But Shannon was less interested in understanding why language exhibited these underlying patterns. Why was it structured in this way? What does it say about how humans use language if it results in language having this stochastic feature?

AI and Humans Play the Same Language Game

Ludwig Wittgenstein was a philosopher who sought to understand the role language plays in shaping thought, structuring communication and defining the boundaries of meaning. While studying at Cambridge under Bertrand Russell, he developed a theory which claimed that all meaningful statements must correspond to logical structures which mirror reality.

However, later in life, Wittgenstein returned to Cambridge, rejected his earlier views, and developed an entirely new theory of language. This is the version we will discuss here.

At the heart of this new theory was the concept of language games. Wittgenstein argued that language is not a fixed system with universally agreed-upon definitions, nor does it have an innate or static structure. Instead, it is a dynamic system shaped by how humans use it to navigate and interact with the world.

Put simply, language is an activity, not just a system of labels and this activity is what Wittgenstein called a “language game”.

Wittgenstein deliberately called this activity a “game” to emphasize that language follows rules shaped by context. Think of other games such as chess, football, or poker: each has its own set of rules that make sense within the game but would seem arbitrary outside of it. The rules of poker, for example, are entirely specific to the game’s goal and structure and don’t apply to chess or football.

If you observed a game long enough, you could probably figure out its rules just by watching. Similarly, even without playing, you could infer the structure of a game by reading reports about it, who won, what moves were made and what strategies were used. Wittgenstein argued that language works in much the same way. We learn its rules not by memorizing definitions, but by seeing how words are used in different contexts.

The hidden context of language

Wittgenstein claimed that language is not fixed — its meaning is dynamic and shifts depending on the situation. We can see this in everyday language, where the same word can take on radically different meanings depending on the context in which it is used.

For example, in a software development context or “game” as Wittgenstein would describe it, the phrase “This is really bad, there are bugs all over the place” clearly refers to software bugs or specifically, errors in a program.

However, in a different language game, such as pest control, the same sentence would have an entirely different meaning. Here, “bugs all over the place” would literally refer to an infestation of insects.

The same principle applies to other words. If a photographer says, “the light is perfect for the shot”, they likely mean that the conditions are ideal for capturing a photo. But in a hunting context, “shot” would refer to firing a weapon. The word itself has no fixed meaning; it derives meaning from how it is used in a particular situation.

Another key aspect of context is that it makes some words more probable than others. For example, in hunting or photography, the phrase “the light is perfect for the …” is far more likely to be completed with “shot” than with “meal”, “meeting”, “film” or “performance”. While these words make sense in other contexts, they feel out of place in this one.

Identifying Shannon’s Structure

This is the “why” of language — the reason behind the patterns that Shannon identified. The rules of a language game shape which words are more likely to appear in certain contexts. These rules are not explicitly agreed upon but are instead implicitly understood through shared experience. When we engage in conversation, we assume that others already understand these contextual rules, whether in photography, hunting, software development, or pest control.

Wittgenstein’s idea of language games illustrates that language is an activity, not merely a system of labelling objects in the world. It is not a mirror of reality but a tool for defining meaning through use. This is a crucial distinction and one worth pausing to reflect on.

If language were merely a “labelling system” then ChatGPT likely wouldn’t work. Or, at the very least, it would not perform anywhere near the level we see today. Instead, it would resemble something closer to the “stochastic parrot” criticism, in which models like ChatGPT are dismissed as mere statistical machines, storing and retrieving text without understanding, much like the rule-follower in John Searle's Chinese Room thought experiment.

If we learned language by simply pointing at things and naming them, then our understanding of language would be nothing more than a static lookup table. You would look at a phone on a desk and say “phone” and the person learning would just record this as a fixed definition.

But language doesn’t work this way and it's unlikely ChatGPT does either.

Consider the word “phone”. If there were a 1-to-1 relationship between things in the world and words then how would you indicate you need to “phone someone”? Would you point at someone holding a phone? How would the person know you are not referring to the physical device itself? Similarly, how would you point at “thinking”? Would you gesture toward someone in the philosopher's pose and expect them to infer that you mean the act of thinking itself?

Instead, Wittgenstein claims, we learn the meaning of “phone” through usage. If someone is standing near a desk and the phone is ringing and they say “phone” then it likely means something like “your phone is ringing, you should answer it”. If someone suddenly runs to your desk and seems animated and shouts “phone” then it likely means something like “give me a phone, this is important or this is an emergency”. Similarly, when crossing a busy street where cars are idling at traffic lights and someone shouts “green” as you start to cross then they likely mean something like “stop, the traffic lights are green and the cars are moving and will likely hit you if you cross”. In this way we could associate the word “green” with something like “stop” whereas we generally define it to mean “go” in the context of traffic lights.

Through repeated exposure to language games, we implicitly learn how meaning shifts across different contexts without needing explicit instruction.

Encoding context for ChatGPT to discover

In everyday conversation, physical context helps us infer meaning effortlessly. If I am standing near a desk and you say “phone” or “mouse”, I don’t need further clarification; I can infer what you mean from our shared environment.

Models like ChatGPT, however, lack this physical proximity. They cannot rely on real-world context to resolve ambiguity in language.

But that doesn’t mean they can’t learn context. Instead of direct sensory experience, these models extract meaning from vast amounts of textual data such as books, forums, scripts and more. The redundancy and patterns Shannon identified ensure that human language implicitly encodes more context than we realize, allowing AI models to compensate for their lack of physical experience.

This also explains why ChatGPT requires far more training data than a human child to reach similar levels of linguistic competence. Humans “cheat” by using the real world as a built-in training set. We absorb language not just from text and speech but from direct interaction with our surroundings, an extra layer of meaning that AI lacks.

Given Wittgenstein’s later theory of language as a game, you might assume he would have predicted the rise of models like ChatGPT. But, in fact, his own writing suggests he would not have believed AI like this to be possible.

Before concluding, let’s examine why Wittgenstein’s view implies we could never truly understand AI models.

The Lion Speaks Tonight

Wittgenstein famously noted that “if a lion could talk, we could not understand him”. He meant that understanding language isn’t just about knowing words. It requires sharing a world, a “form of life”.

Even if a lion somehow spoke human words, we would not share the same world. Without a common context, understanding would be impossible. In Wittgenstein’s terms, we would not be playing the same language games.

From his perspective this makes sense. In Wittgenstein’s time, language was deeply tied to lived experience and there was no internet, no vast corpus of text capturing human interaction the way modern training data does for AI. In Wittgenstein’s thought experiment, the lion simply happens to “speak” the same language as humans. There is no suggestion that the lion learned to speak by consuming vast amounts of text data, as modern LLMs do.

Without access to human experiences or culture, the lion could not infer the hidden rules that structure our language games. Its “form of life”, its way of existing in the world, would be completely alien to ours. As a result, even if we spoke the same words, we would not truly understand each other.

But what Wittgenstein did not anticipate is that, one day, there could be a lion trained on the text of every conceivable human language game. And that this lion would have an extraordinary amount of computational resources at its paws.

While we cannot say that models like ChatGPT “understand” us in a human sense, we can say something Wittgenstein likely never imagined: They can speak our language, and we can have intelligible conversations with them.

The Language Monolith

As we noted earlier, to understand ChatGPT, we must also understand human language and the signals it encodes. ChatGPT was able to identify the statistical patterns that Shannon discovered, recognizing that human language is not random but structured and, to some extent, predictable. More importantly, it was able to exploit what Wittgenstein described as the implicit rules of the language games we play every day.

These rules manifest as patterns within specific domains of language. Meaning is determined not by fixed labels, but by how words are used in different contexts. For example, when people use the word “catch” in the context of sports, the model learns associations with balls, scores, fields, winning, losing, fouls and so on.

But when it encounters “catch a fish” the associations shift, since it now likely links to words such as rods, rivers, boats, baits and lures that it would have encountered during training. The language game of fishing is distinct from the language game of football and ChatGPT, like us, learns to distinguish between them. In this way, ChatGPT infers the “form of life” embedded in human language, allowing us to understand this particular species of silicon-based lion.
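A toy illustration of how the associations around “catch” shift between games is to count the words that appear alongside it in each one. The two corpora below are invented, the stopword list is arbitrary, and raw co-occurrence counting is only a loose analogy: models like ChatGPT learn far richer representations than a table of counts.

```python
from collections import Counter

# Assumption: two tiny invented corpora standing in for two language games.
FOOTBALL = [
    "the receiver made a catch near the end of the field",
    "that catch kept the ball in play and won the game",
]
FISHING = [
    "we hoped to catch a fish from the boat on the river",
    "with fresh bait on the rod you will catch more fish",
]
STOPWORDS = {"the", "a", "of", "to", "in", "on", "and", "we", "you", "that", "with", "from"}

def associations(sentences: list[str], target: str) -> Counter:
    """Count the non-stopword words that share a sentence with the target word."""
    counts = Counter()
    for sentence in sentences:
        words = sentence.split()
        if target in words:
            counts.update(w for w in words if w != target and w not in STOPWORDS)
    return counts

print(associations(FOOTBALL, "catch").most_common(5))
print(associations(FISHING, "catch").most_common(5))
```

The same word ends up surrounded by entirely different company in each corpus, which is the statistical footprint a language game leaves behind in text.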

Where are the answers hidden?

We may never fully explain how models like ChatGPT work. We may never grasp the intricacies of their non-linear transformations, attention mechanisms and learned representations. But even if we did, would that truly answer the deeper questions we are asking?

Shannon and Wittgenstein both pointed us toward a different mystery – the nature of language itself:

- How do children learn language with so much less data than LLMs?

- How can we define even simple concepts if no “pure” form of one exists?

- Does the way we use language enable us — or force us — to create models of the world by figuring out what features apply to which language game?

- How do we understand and use words, even when their meaning shifts across different contexts?

- What does the way we, as humans, use language reveal about intelligence and consciousness?

When we step back and ask these questions, we realize something profound: we may know as little about language as we do about LLMs.

Perhaps the real monolith, the one we have been trying to decode all along, is not ChatGPT at all, but human language itself.

This essay was originally published on Substack and is republished here on The Meaning Algorithm.