External Publications

Philosophy Now Blog

Bored With Time? | Issue 65 | Philosophy Now

Cathal Horan is distracted by the eternal search for meaning.

Psychoanalysis & Philosophy (I) | Issue 68 | Philosophy Now

Cathal Horan analyses Freud through the eyes of Hegel and Schopenhauer.

Intercom Blog

A future without code

As technology matures, our software products and services are evolving without everyone knowing coding languages. The future will be a world without code.

The programming of everyday things

Programming languages are a lot like human languages – they influence the way we interact with the world around us. And especially how we use products.

The golden mean of design and engineering

For great products to be built, both design and engineering need to be in balance. If you have a super strong engineering team but a weak or underserved design team, you will struggle.

An engineer’s take on the theory of dominant design

Dominant design is what happens when one design becomes the standard, and marketing becomes more important. For any engineer, this can be uncomfortable.

Learn, unlearn and relearn: the changing face of your engineering career

A successful engineering career is now less about going deep on one particular skill and more about being open to learning newer technologies quickly. We explore how to build an engineering career in 2018.

Understanding AI: How we taught computers natural language

ChatGPT has revolutionized the world’s perception of AI, but how did it master human language? Discover the key milestones that led us here.

FloydHub AI Blog

Using Sentence Embeddings to Automate Customer Support, Part One

Learn how to use sentence embeddings to automate your customer support with AI. Most of your company’s customer support questions have already been asked and answered. You’re not alone, either. Amazon recently reported that up to 70% of their […]

Using NLP to Automate Customer Support, Part Two

Let’s build a natural language processing (NLP) model that can help out your customer support agents by suggesting previously-asked, similar questions. In our last post, we learned how to use one-hot encodings […]

Tokenizers: How machines read

We will cover often-overlooked concepts vital to NLP, such as Byte Pair Encoding, and discuss how understanding them leads to better models.

Ten trends in Deep learning NLP

Let’s uncover the Top 10 NLP trends of 2019.

When Not to Choose the Best NLP Model

The world of NLP already contains an assortment of pre-trained models and techniques. This article discusses how to best discern which model will work for your goals.

NLP Datasets: How good is your deep learning model?

With the rapid advance in NLP models, we have outpaced our ability to measure just how good they are at human-level language tasks. We need better NLP datasets now more than ever, both to evaluate how good these models are and to be able to tweak them for our own business domains.

Neptune AI Blog

Transformer Models for Textual Data Prediction - neptune.ai

Transformer models such as Google’s BERT and OpenAI’s GPT-3 continue to change how we think about Machine Learning (ML) and Natural Language Processing (NLP). Look no further than GitHub’s recent launch of a predictive programming support tool called Copilot. It’s trained on billions of lines of code, and claims to understand “the context you’ve […]

Unmasking BERT: The Key to Transformer Model Performance - neptune.ai

If you’re reading this article, you probably know about Deep Learning Transformer models like BERT. They’re revolutionizing the way we do Natural Language Processing (NLP). 💡 In case you don’t know, we wrote about the history and impact of BERT and the Transformer architecture in a previous post. These models perform very well. But why? […]

Understanding Vectors From a Machine Learning Perspective - neptune.ai

If you work in machine learning, you will need to work with vectors. There’s almost no ML model where vectors aren’t used at some point in the project lifecycle. And while vectors are used in many other fields, there’s something different about how they’re used in ML. This can be confusing. The potential confusion with […]

Wasserstein Distance and Textual Similarity - neptune.ai

In many machine learning (ML) projects, there comes a point when we have to decide the level of similarity between different objects of interest. We might be trying to understand the similarity between different images, weather patterns, or probability distributions. With natural language processing (NLP) tasks, we might be checking whether two documents or sentences […]

Word Embeddings: Deep Dive Into Custom Datasets

One of the most powerful trends in Artificial Intelligence (AI) development is the rapid advance in the field of Natural Language Processing (NLP). This advance has mainly been driven by the application of deep learning techniques to neural network architectures which has enabled models like BERT and GPT-3 to perform better in a range of […]

10 Things to Know About BERT and the Transformer Architecture

Few areas of AI are more exciting than NLP right now. In recent years language models (LM), which can perform human-like linguistic tasks, have evolved to perform better than anyone could have expected. In fact, they’re performing so well that people are wondering whether they’re reaching a level of general intelligence, or the evaluation metrics […]

Can GPT-3 or BERT Ever Understand Language?—The Limits of Deep Learning Language Models - neptune.ai

It’s safe to assume a topic can be considered mainstream when it is the basis for an opinion piece in the Guardian. What is unusual is when that topic is a fairly niche area that involves applying Deep Learning techniques to develop natural language models. What is even more unusual is when one of those […]

Transformer NLP Models (Meena and LaMDA): Are They “Sentient” and What Does It Mean for Open-Domain Chatbots? - neptune.ai

First of all, this is not a post about whether Google’s latest Deep Learning Natural Language Processing (NLP) model LaMDA is the real-life version of HAL 9000, the sentient Artificial Intelligence (AI) computer in 2001: A Space Odyssey. This is not to say that it is a pointless question to ask. Quite the opposite, it is a […]

Weights and Biases AI Blog

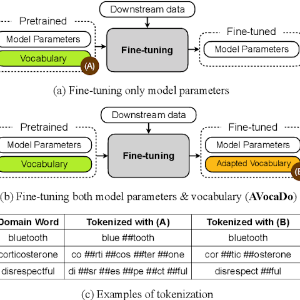

Transformers, tokenizers and the in-domain problem

What happens when generally trained tokenizers lack knowledge of domain-specific vocabulary? How much of a problem is this for models like BERT?