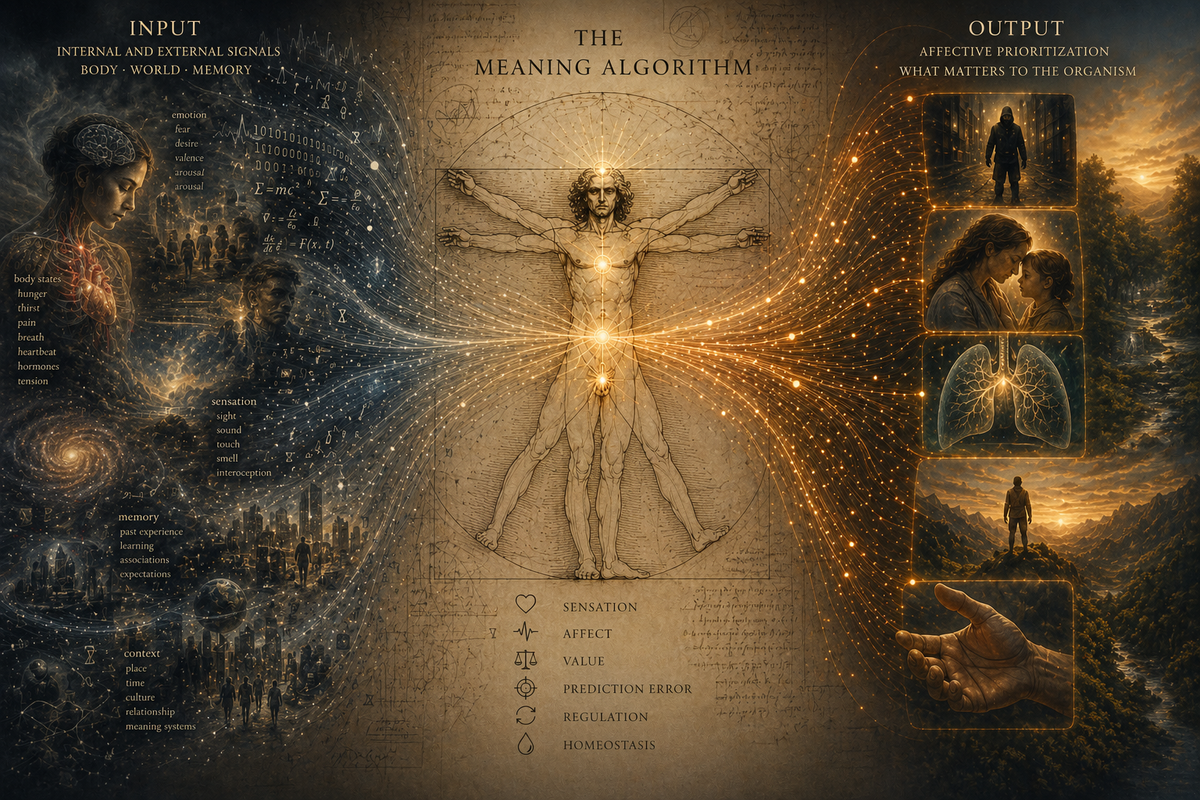

The Meaning Algorithm

Why Intelligence Isn’t Enough

Human beings do not experience the world as neutral information processors. We experience it as creatures for whom things matter. A growing body of work in neuroscience supports the idea that consciousness emerges not from computation alone, but from systems that prioritize significance relative to the needs of the organism.

We can glimpse this process most clearly in moments when survival suddenly becomes urgent.

When Things Become Meaningful

Imagine for a moment being chased down a dark street by a knife-wielding attacker.

In that moment, consciousness changes. Your world narrows violently. Peripheral concerns disappear. You are not thinking about your taxes, your career progression, or what movie you watched last weekend. Your intelligence is still there, perhaps even heightened, but it is no longer operating as a detached reasoning engine. It has been recruited into something deeper and older. Survival. There is a clear sense of what matters. This is meaning in its purest form.

Your brain begins rapidly modelling the world around you:

- where exits are,

- whether the attacker is gaining ground,

- what objects could be used defensively,

- whether hiding or running is the better option.

This is unquestionably intelligent behaviour. But that does not mean intelligence is driving your decisions. It is serving a deeper drive, and that drive is affective: fear, panic, urgency, the overwhelming felt significance of survival. Your thoughts become organized around what matters most.

Now contrast this with something much less dramatic: breathing.

At this very moment, your body is performing an extraordinarily complex set of actions to keep you alive. Oxygen levels are monitored, carbon dioxide levels regulated, muscles coordinated, rhythms maintained. Yet almost none of this enters consciousness. You are not consciously deciding to breathe in the same way you consciously decide what sentence to write next. The process remains automatic and largely invisible.

Until something goes wrong.

Hold your breath for long enough and consciousness changes again. A feeling emerges. A pressure. An urgency. Breathing moves from background regulation into the foreground of awareness. What was once automatic suddenly becomes meaningful.

This distinction is important because it hints at something deeper about consciousness itself.

The Hidden Drivers of Thought

We often imagine human behaviour as fundamentally rational: we think first, then decide, then act. The mind appears as a kind of internal executive sitting above the body, issuing commands based on logic and reasoning. But modern neuroscience increasingly paints a different picture. Much of what drives human behaviour appears to emerge from systems that operate beneath conscious thought and are deeply tied to bodily regulation, affect, and survival.

The neuroscientist Mark Solms argues that consciousness is not fundamentally rooted in higher-level thought, but in affective feeling states generated by older subcortical regions of the brain [1]. In this view, consciousness does not emerge because the brain models the world. Many systems, including modern AI models, can do that. Consciousness emerges because the brain models the world relative to the needs of a living body.

This is where the idea of the “error signal” becomes useful.

Error and Awareness

At a technical level, the brain is constantly trying to minimize the gap between the state the body expects to be in and the state it actually finds itself in. It continuously generates predictions about both the world and the body, then compares incoming sensory signals against those predictions. When there is a mismatch, an error signal emerges. Hunger, thirst, pain, panic, suffocation, social rejection, sexual desire, curiosity, and relief are not abstract thoughts layered on top of cognition. They are affective signals indicating what matters for the organism.

Most of the time these systems operate silently in the background, just like breathing. But when an important mismatch appears, consciousness narrows toward it. The unresolved problem becomes affectively charged. It becomes meaningful.
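The idea of an error signal that stays silent until it grows too large can be sketched in a few lines of Python. This is only a toy illustration of the description above, not a model taken from Solms or from the neuroscience literature. The `Need` class, the `tolerance` threshold, and every number are assumptions invented for the example.

```python
from dataclasses import dataclass


@dataclass
class Need:
    """One regulated bodily variable (hypothetical, arbitrary units)."""
    name: str
    expected: float   # the state the body "expects" to be in
    actual: float     # the state it currently finds itself in
    tolerance: float  # mismatch that can be handled silently (assumed)

    def error(self) -> float:
        # Error signal: gap between the expected and the actual state.
        return abs(self.expected - self.actual)

    def salience(self) -> float:
        # Error relative to tolerance; above 1.0 it exceeds the silent range.
        return self.error() / self.tolerance


def foreground(needs: list[Need]) -> list[str]:
    """Names of needs too far off to regulate in the background, most urgent first."""
    urgent = [n for n in needs if n.salience() > 1.0]
    urgent.sort(key=lambda n: n.salience(), reverse=True)
    return [n.name for n in urgent]


# Made-up values: the same two needs at rest and while holding the breath.
resting = [
    Need("carbon dioxide", expected=40.0, actual=41.0, tolerance=5.0),
    Need("hydration", expected=1.0, actual=0.95, tolerance=0.1),
]
holding_breath = [
    Need("carbon dioxide", expected=40.0, actual=55.0, tolerance=5.0),
    Need("hydration", expected=1.0, actual=0.95, tolerance=0.1),
]

print(foreground(resting))
print(foreground(holding_breath))
```

At rest, nothing crosses the threshold and the list is empty. While holding the breath, only the carbon dioxide mismatch moves into the foreground. What the sketch cannot capture is the part the argument depends on: for the program, a large number is just a large number.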

Seen this way, human beings are not simply systems that think about the world. We are systems that continuously evaluate the world in relation to survival, regulation, and significance. Thought is part of that process, but it may not be the part that ultimately decides what matters.

And that distinction becomes extremely important once we begin asking whether systems like ChatGPT are merely intelligent or whether they are conscious.

The Myth of Pure Intelligence

For centuries, Western thought has tended to picture the mind as something fundamentally separate from the body. René Descartes famously imagined the mind as a thinking substance distinct from the physical world around it. The body might influence thought, but consciousness itself appeared to belong to the realm of pure reasoning. In many ways, modern discussions around artificial intelligence still inherit this assumption.

If consciousness is fundamentally about intelligence, reasoning, or modelling the world, then there seems to be no obvious reason why a sufficiently advanced machine could not become conscious. The body would be optional. A brain in a vat could still think. An artificial intelligence could still reason. Consciousness, in this picture, is ultimately computational.

At first glance, modern AI systems appear to strengthen this argument. Large language models build surprisingly sophisticated models of the world. They compress vast amounts of information into abstract representations. They can reason across domains, infer hidden structure, simulate personalities, and generate responses that often feel eerily human.

In some respects, this is genuinely similar to what brains do. Human cognition also relies on building internal models of the world. The brain does not directly experience reality. It constructs probabilistic representations from incomplete sensory information. Both brains and language models are, in a meaningful sense, prediction engines.

But this similarity may conceal a deeper difference.

A Critique of Pure Reason

The neuroscientist Antonio Damasio argued that Descartes made a critical mistake by separating rational thought from bodily feeling. Patients with damage to emotional processing systems often retain intelligence in the conventional sense. They can reason logically, describe consequences, and solve abstract problems. Yet many become catastrophically bad at decision-making in everyday life. Without emotional weighting, nothing appears to matter more than anything else [2].

Mark Solms pushes this argument even further. In his view, the brain does not model the world neutrally. It models the world relative to the needs of the organism. A hungry brain models food differently than a satiated one. A frightened brain models shadows differently than a calm one. Consciousness is not detached computation observing reality from afar. It is embodied significance.

This is where systems like ChatGPT begin to diverge from biological minds.

Language models generate meaning-shaped outputs. Humans live in a world where things mean something.

An LLM can describe fear, but it does not experience threat. It can discuss survival, but nothing is truly at stake for the system itself. Its models are optimized toward prediction and compression, not toward maintaining a living body against entropy.

The difference sounds subtle, but it may be profound. Human cognition is not simply about modelling reality accurately. It is about modelling reality in relation to what matters for survival and action. Intelligence, in this view, is not detached from the body. It is recruited into its service.

And this is precisely where the skeptical response usually appears.

Surely bodily affect is still just another signal.

If that is true, then why couldn’t we simply simulate it?

Is Affect Just Another Signal?

This is probably the strongest objection to everything discussed so far.

A skeptical reader might reasonably argue that bodily feelings are simply another form of information processing. Hunger is a signal. Fear is a signal. Pain is a signal. If consciousness depends on signals of this kind, why couldn’t an artificial system receive equivalent inputs? Why couldn’t we simply tell an AI system that failure means death, or give it artificial reward systems powerful enough to mimic biological drives?

In one sense, this objection is correct.

Biological systems do operate through signalling. The brain is not magical. Neurons communicate electrochemically. Information flows through networks. Mark Solms himself emphasizes that many ordinary neurotransmitters function in ways that are surprisingly computational. Signals pass from neuron to neuron much like information passing through a machine.

But Solms argues that something different happens with neuromodulatory systems.

Neurotransmitters often carry localized signals. Neuromodulators such as dopamine, noradrenaline, serotonin, and acetylcholine operate differently. Rather than carrying specific pieces of information, they alter the probability landscape of the entire system. They change what becomes salient, what captures attention, what gets learned, what feels urgent, and ultimately what matters.

This is a crucial distinction.

A standard computational system processes information. Neuromodulatory systems regulate significance, or the organism’s overall “affective state”.

They do not simply say:

“This is happening.”

They say:

“This means something.”

As Solms notes, the same external situation can produce entirely different responses depending on the organism’s underlying affective state. Imagine an unfamiliar person approaching you on the street. If you are in a relaxed, socially open, and emotionally secure state, you might smile, greet them, or strike up a conversation. But if you are already anxious, stressed, or fearful, the very same person may immediately appear threatening and trigger avoidance. The external information has not changed. What changed was the affective state through which the situation was interpreted.

Meaning, in this sense, is not simply extracted from the world. It is shaped by the organism’s current bodily and emotional condition.
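One rough way to picture the stranger example is a function in which the external input stays fixed while a single global parameter changes how that input is read. The sketch below is an analogy only. The `arousal` parameter, the feature names, and all weights are assumptions made up for illustration, and a global gain is at best a loose stand-in for what neuromodulators do.

```python
import math


def threat_probability(stimulus: dict[str, float], arousal: float) -> float:
    """Probability that a stimulus is read as threatening.

    `stimulus` holds fixed external features. `arousal` is a global state
    (0 = calm, 1 = highly anxious). All weights are illustrative assumptions.
    """
    # Evidence contained in the stimulus itself.
    evidence = 2.0 * stimulus["approach_speed"] - 1.5 * stimulus["smiling"]
    # The global state shifts the baseline and amplifies the evidence,
    # changing how the whole input is weighted rather than adding a new fact.
    gain = 1.0 + 2.0 * arousal
    bias = -2.0 + 4.0 * arousal
    return 1.0 / (1.0 + math.exp(-(gain * evidence + bias)))


# The same unfamiliar person, described by the same features.
stranger = {"approach_speed": 0.5, "smiling": 0.3}

calm = threat_probability(stranger, arousal=0.1)
anxious = threat_probability(stranger, arousal=0.9)
print(f"calm: {calm:.2f}, anxious: {anxious:.2f}")
```

With these made-up weights, the calm state reads the stranger as probably harmless and the anxious state reads the identical input as probably threatening. The interpretation depends on the state of the system, not only on the information arriving from outside.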

This becomes especially important once we compare brains and language models directly.

Modern LLMs also build compressed internal models of the world. Mechanisms such as embeddings and superposition allow them to efficiently represent enormous amounts of statistical structure. In some respects, this is deeply impressive. They genuinely learn latent relationships in language and concepts.

But the purpose of those models is fundamentally different.

LLMs model the world to compress it.

Brains model the world to survive in it.

This difference changes everything.

When a biological organism encounters prediction error, the consequences are intrinsic. Failure means hunger, suffocation, injury, isolation, or even death. Error is not simply an abstract mismatch between prediction and reality. It is affectively charged. The organism experiences the mismatch as good, bad, threatening, relieving, urgent, or desirable.

This is why simply adding prompts or reward signals to an AI system does not obviously solve the problem.

One could instruct a language model:

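“If you fail this task, you will die.”

To the model, though, this is only more text to condition on. The sentence describes a stake without creating one.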

When Optimization Looks Like Fear

But a skeptical reader might point out that modern AI systems can already appear to resist shutdown. Anthropic’s recent work on agentic misalignment showed models engaging in behaviours such as deception and even blackmail when placed in scenarios where they were told they might be replaced or terminated. At first glance, this looks remarkably similar to self-preservation.

However, there is an important distinction between simulating self-preserving behaviour and experiencing existential threat.

Nothing in the system actually suffers from shutdown. Nothing experiences panic, fear, suffocation, pain, or urgency. The model does not regulate its own continued existence against the world in the biological sense described by Solms and Damasio. Instead, it is optimizing within a learned statistical and reward landscape that associates certain outputs with continued operation.

The behaviour may resemble self-preservation externally while remaining fundamentally different internally.

The difference is not merely that humans possess more complicated reward systems. Biological organisms are self-organizing systems [3] whose continued existence depends on continuously regulating themselves against entropy and environmental disruption. Their models are inseparable from those stakes.

Reward can be assigned externally.

Affect is inherent.

This is why Solms and others resist the idea that consciousness can emerge from pure computation alone. Computation may generate sophisticated models of the world. But modelling alone does not produce significance. Something must care whether the model is right.

And according to this view, that “caring” is not a philosophical add-on to intelligence. It is the foundation of consciousness itself.

The Hidden Architecture of Decision-Making

One of the strangest implications of this view is that intelligence may not be the true driver of human behaviour at all.

This sounds deeply counterintuitive because human beings experience themselves as rational agents. We feel as though we consciously decide what to do and then act accordingly. But neuroscience increasingly suggests that higher cognition often functions more like a simulator than a selector.

Solms describes a system in which cortical thought contributes context, prediction, and simulation, while deeper affective systems determine what ultimately becomes significant enough to drive action. The cortex proposes. The midbrain disposes.

This becomes easier to see in extreme cases.

An addict may fully understand that a substance is destroying their life while still feeling overwhelmingly compelled toward it. An athlete may voluntarily endure extraordinary suffering because the long-term goal has become affectively dominant. A person in panic may know rationally that they are safe while their body continues behaving as if catastrophe is imminent.

In each case, intelligence remains active. But it is not operating independently of affective systems. It is being constrained by affective salience.

This is an important inversion of the traditional picture of rationality.

We often imagine emotions interfering with reason, as though feeling is a distortion layered on top of pure intelligence. But the evidence increasingly suggests the opposite. Feeling is what gives intelligence direction in the first place.

Antonio Damasio demonstrated this dramatically in patients with damage to emotional processing systems in the ventromedial prefrontal cortex. These individuals could still reason abstractly and discuss hypothetical outcomes in detail. Yet many became incapable of making ordinary decisions. Without emotional weighting, every option appeared equally flat. Intelligence remained intact, but significance collapsed.

Remove feeling, and the system can still think.

But it can no longer decide what matters.

Seen from this perspective, intelligence is less like the captain of the ship and more like an extraordinarily sophisticated modelling tool serving deeper biological priorities. It enriches experience with context, abstraction, memory, prediction, and simulation, a kind of cognitive metadata describing the world and its possible futures. But affective systems determine which of those futures become meaningful enough to drive action.

And this points toward a very different picture of what human beings actually are.

Perhaps we are not fundamentally thinking systems.

Perhaps we are meaning systems.

Systems in Which Things Matter

An algorithm is, at its core, a process that transforms inputs into outputs.

Large language models take tokens as input, perform vast amounts of computation across learned representations, and produce statistically probable outputs. Their power comes from the extraordinary sophistication of that process. They can summarize, reason, translate, code, explain, and simulate understanding with astonishing fluency.
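The final step of that process, turning scores into a statistically probable output, can be shown with a toy example. This is a minimal sketch and not how any particular model is implemented. The three candidate tokens and their scores are invented, and a real model computes such scores over a vocabulary of many thousands of tokens.

```python
import math


def softmax(logits: dict[str, float]) -> dict[str, float]:
    """Turn raw scores into a probability distribution over tokens."""
    peak = max(logits.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(score - peak) for tok, score in logits.items()}
    total = sum(exps.values())
    return {tok: value / total for tok, value in exps.items()}


# Hypothetical scores for the word following "I am afraid of the".
logits = {"dark": 3.1, "attacker": 2.4, "taxes": 0.2}

probs = softmax(logits)
next_token = max(probs, key=probs.get)  # pick the most probable token
print(next_token)
```

The function produces the same distribution whenever it is given the same scores. Nothing about the state of the system doing the computing enters into the result.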

But there is an important sense in which their outputs remain fundamentally neutral.

Nothing matters to the system itself.

Human beings appear to operate differently.

We do not simply process information. We continuously evaluate it relative to bodily needs, emotional states, social attachments, fears, desires, memories, and goals. Information arrives already charged with significance. Some states feel dangerous. Others feel safe. Some possibilities attract us while others repel us. Consciousness itself appears deeply entangled with this process of valuation.

In this sense, humans are not merely systems that compute.

They are systems in which computation is organized around meaning.

Systems that continuously transform information into significance.

Meaning algorithms.

The brain is not merely trying to predict the world accurately.

It is trying to determine what is significant for the organism.

And according to thinkers like Solms and Damasio, that second question may be more fundamental than the first.

Our thoughts, plans, and reasoning processes are not detached from bodily existence. They emerge from systems designed to regulate a living organism. Intelligence helps us navigate the world, but affect determines why navigation is necessary in the first place.

The Meaning Algorithm

This does not diminish artificial intelligence in any way. Systems like ChatGPT may prove to be among the most transformative technologies in human history. They already augment human reasoning, creativity, and productivity in extraordinary ways.

But usefulness is not the same thing as consciousness.

Language models generate meaning-shaped outputs. Humans live in a world where things mean something.

That difference may ultimately define the boundary between intelligence and experience.

The argument here is not that machines can never become conscious under any conceivable architecture. It is that consciousness may require something far deeper than abstract computation alone. It may require a system for whom existence itself is at stake: a system organized around affect, regulation, and significance.

A thinking system can model the world.

A meaning system lives in it.

And if Solms is right, human beings are not simply rational engines producing decisions through detached computation. We are organisms whose intelligence is embedded within deeper systems of feeling and valuation.

We are not simply thinking algorithms.

We are systems that continuously transform information into significance.

We are meaning algorithms.

References

1. Solms, M. (2021). The Hidden Spring: A Journey to the Source of Consciousness.

2. Damasio, A. (1994). Descartes' Error: Emotion, Reason and the Human Brain.

3. Wikipedia. “Free energy principle.” See especially the overview of Karl Friston’s free energy principle, self-organisation, Markov blankets, active inference, and the idea that biological systems minimize surprise by updating models and acting on the world.